Need Legal? Click Zegal

Straightforward legal tech to quickly sign, create, or edit any contract

2,000+ legal document templates

Trusted by over 20,000 companies

1 million+ contracts created with Zegal

Legal Contracts & Document Templates

Need a contract? Join the thousands who trust Zegal daily to keep costs low and compliance high.

No legal knowledge required.

What is Zegal?

Zegal is award-winning legal tech that makes contract creation easy.

Join Zegal now and get your first legally binding template FREE.

Popular Legal Templates

Zegal has over 2,000 legal templates, covering every aspect of business and trade.

World-class legal tech

We’ve devoted over a decade to perfecting our legal app, and it’s ready for you to use.

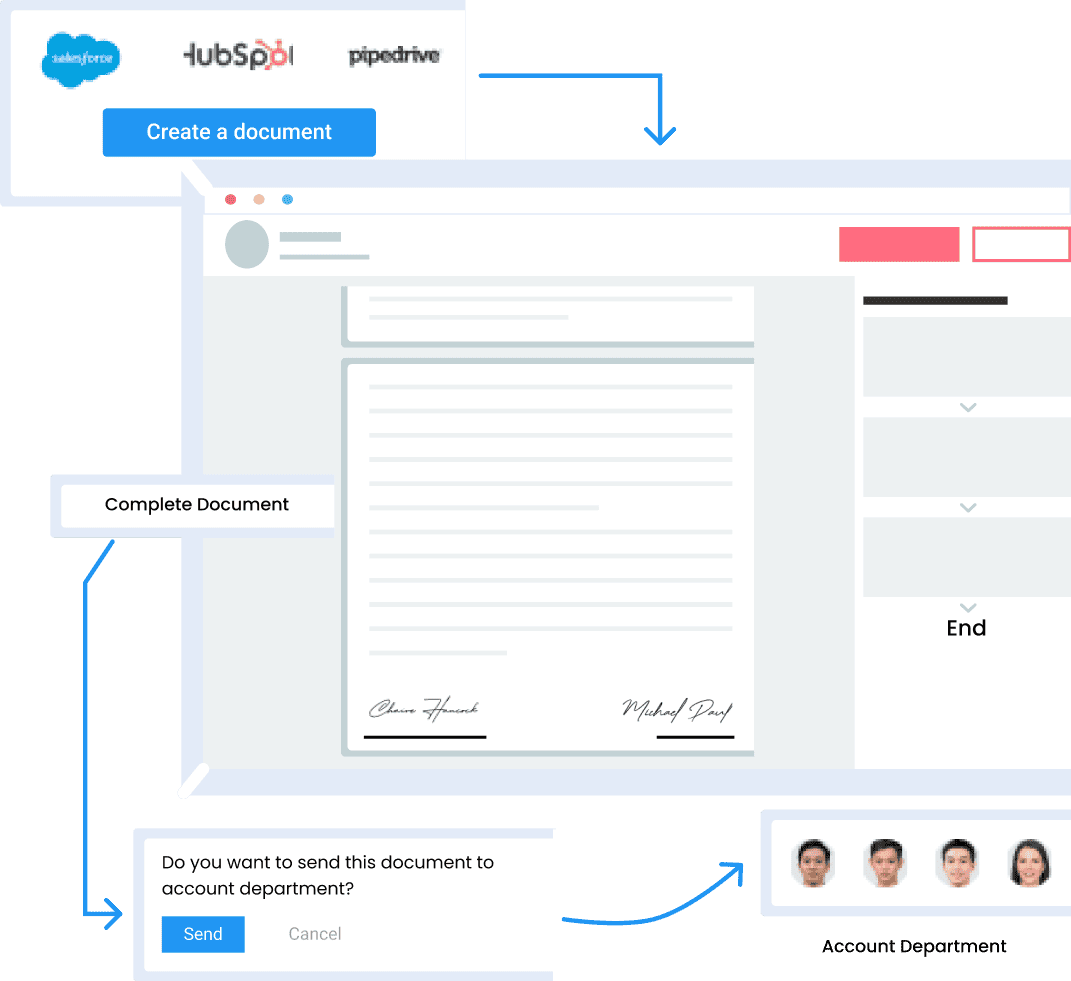

Answer a few simple questions about your needs, and the Zegal document builder will do the rest, creating an iron-clad contract in minutes.

Easy-to-use and legally compliant

Zegal legal templates cover a wide array of business scenarios, from freelance to large enterprise.

The intuitive legal tech software guides users step-by-step to ensure accuracy and legal compliance.

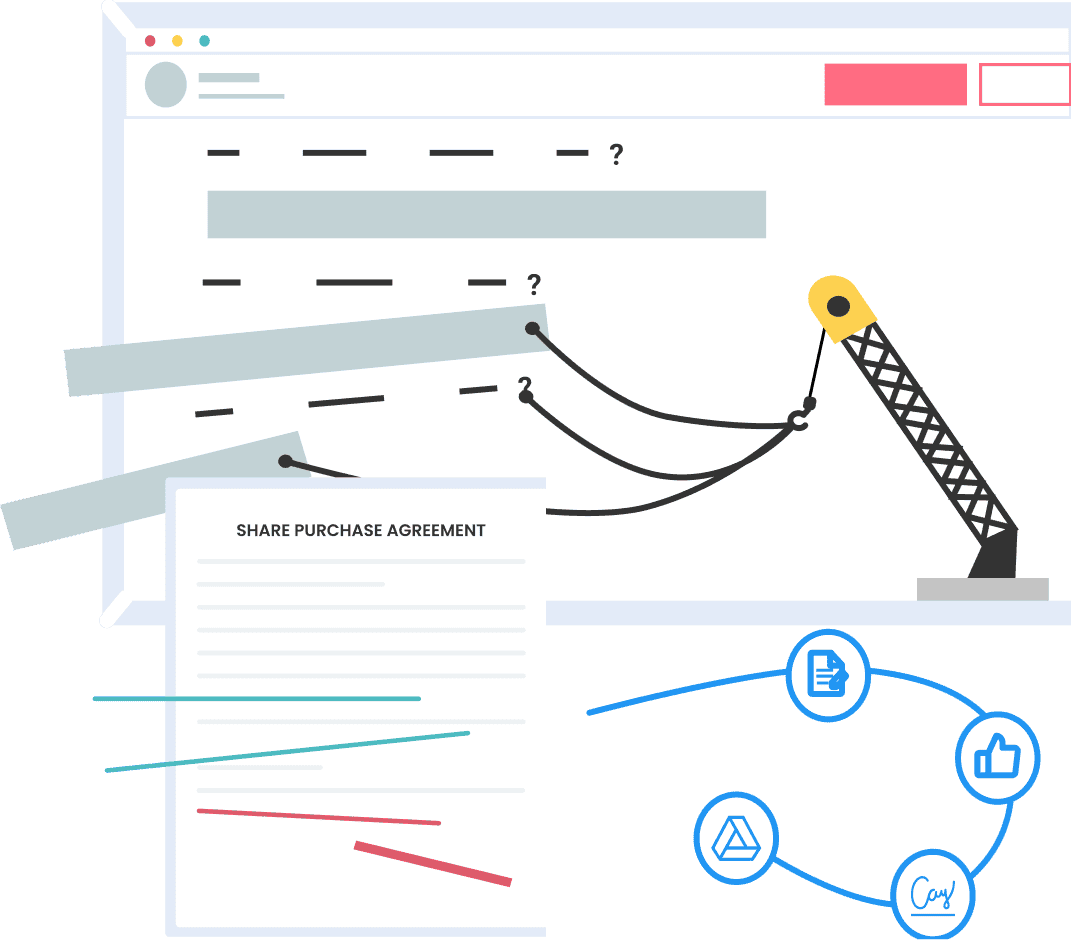

Cutting-edge CLM software

Need more than a contract? Our contract lifecycle management (CLM) software combines traditional legal expertise with cutting-edge technology.

Embrace automation, AI-driven analytics, and real-time collaboration to supercharge your contract management processes.

Get Your First Doc Free

Zegal templates are quick to build and entirely legally binding.

Sign up with a business email, and we’ll give you 7 days to look around the app and create your first legal document completely free.

Choose from over 2,000 templates. No credit card required.

Why join Zegal?

“Zegal is easy to use, affordable and the platform is simple to navigate which makes the process of putting together a document fast and fuss-free.”

“Love the new flow/design, very quick and easy to use now. I have done 2 or 3 customer contracts in a flash over the past 2 days.”

“Consistently positive experiences with Zegal’s technology, and customer services teams, who ensure that our issues or questions are responded to immediately.”

“Using Zegal allows us to take a lean and efficient approach that cuts costs while maximising results.”

“Zegal is easy to use and customer service is responsive and helpful! I strongly recommend it!!”

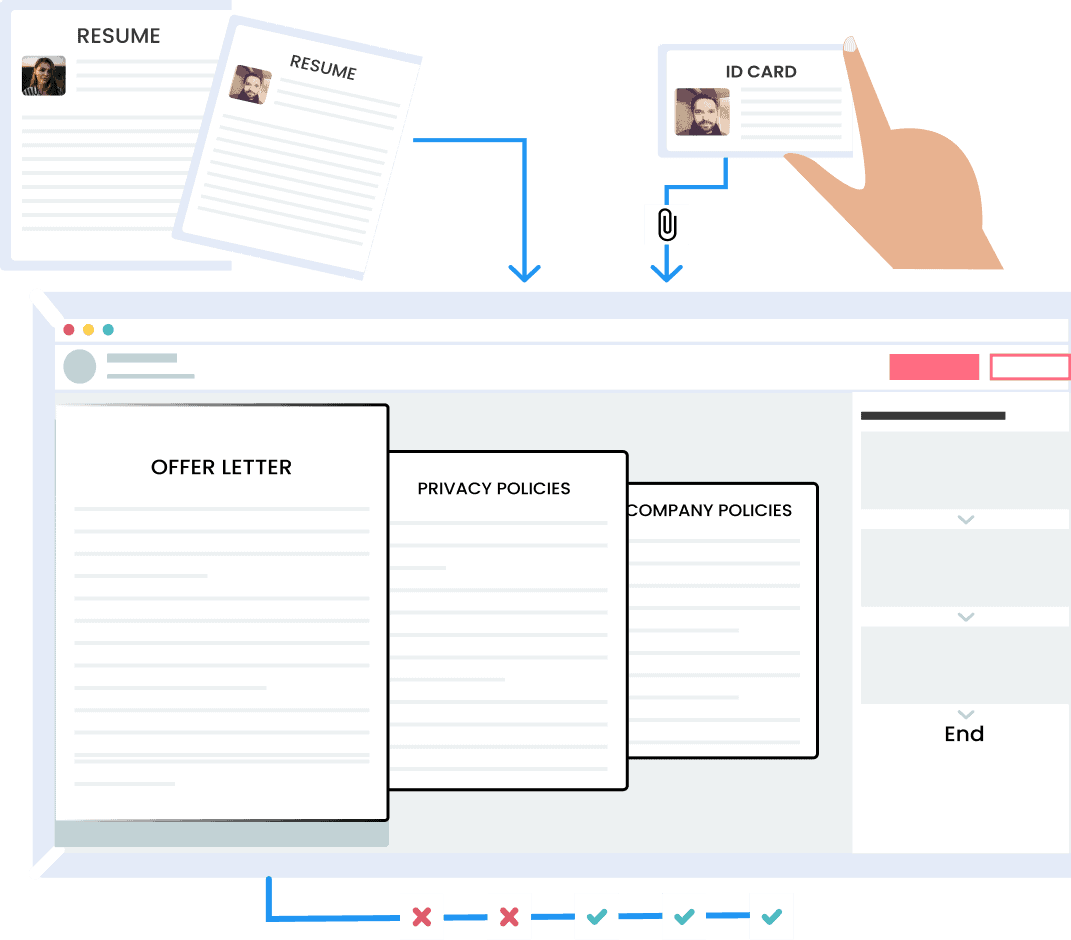

“Zegal makes onboarding a new client or employee fast and simple.”

“Zegal really works well for all our legal documentation needs, and it is also user-friendly and mobile at the same time.”

“Zegal is like my teammate, helps me draft the right template, quickly gets my work done, and also saves me money on legal needs.”

“Zegal has been such a great help in my business operations.”